Outsourced Intake QA: The Scorecard, Call Audits, and KPIs That Keep Performance Consistent

By Adom Francis

Last modified: April 21, 2026

When intake is distributed across locations, shifts, and vendors, performance can drift fast. The result is usually inconsistent lead handling, uneven client experience, and avoidable compliance risk, even when you have good people and good intentions.

This guide is for enterprise and multi-location service businesses, legal intake-heavy firms, and high-volume practices that need reliable overflow and after-hours coverage. You will learn how to design an outsourced intake QA system that is measurable, coachable, and scalable: a call center quality assurance scorecard, intake call monitoring with consistent audits, QA calibration sessions, and the KPIs that actually reflect intake outcomes.

Most intake QA problems are not caused by a lack of monitoring. They come from unclear definitions of “good,” inconsistent scoring between auditors, and KPIs that reward speed over accuracy.

Outsourcing adds two more failure points: (1) distance between your business goals and the agent’s day-to-day decisions, and (2) a change-control gap where scripts, requirements, or eligibility rules evolve faster than training and scorecards.

High variance between sites, teams, or shifts, even when averages look fine.

Rework from missing fields, incomplete notes, or misrouted matters.

Disputed scores because auditors interpret criteria differently.

“Green dashboards” while stakeholders still complain about bad leads or poor handoffs.

QA is shifting from “spot-checking calls” to running a performance operating system. Enterprises are asking QA to answer a tougher question: can we trust intake outcomes to stay consistent while we scale volume, expand hours, and add locations?

Three practical changes are driving this shift: more complex intake workflows, more cross-functional stakeholders (marketing, compliance, clinical/legal operations), and more automation in scoring and coaching. If you introduce AI-assisted QA, align your approach to risk and governance principles such as the NIST AI Risk Management Framework so automation improves consistency without creating opaque decisions or unmanaged failure modes.

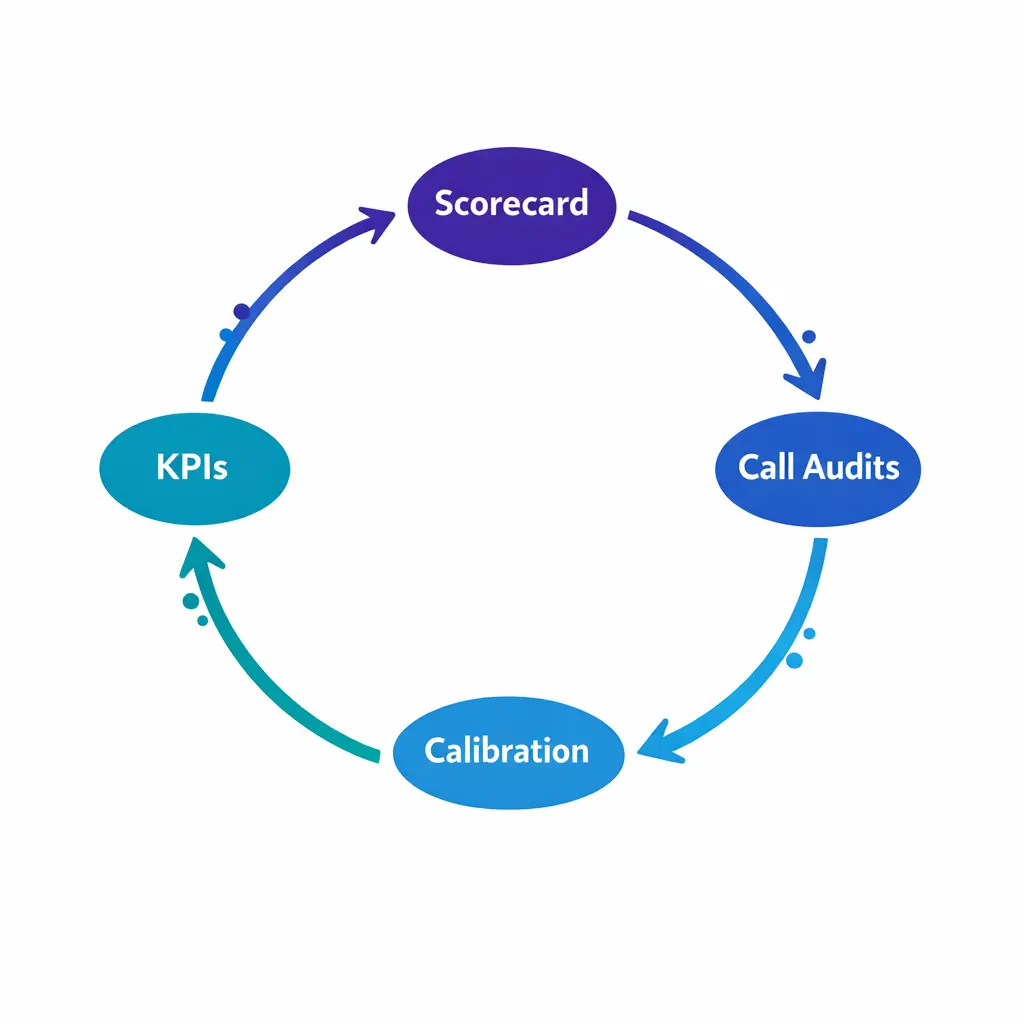

Reliable outsourced intake QA is not a single “quality score.” It is a closed-loop system that connects observed behavior to outcomes, then turns variance into coaching and process fixes.

Scorecard: defines what “good” looks like, including critical errors and weights.

Call audits: consistent sampling, scoring, and documentation across auditors and teams.

QA calibration sessions: keeps scoring aligned as scripts and edge cases evolve.

KPIs: tracks both customer experience and intake integrity (accuracy, eligibility, next steps).

A strong call center quality assurance scorecard is written for decisions, not for trivia. It should measure the behaviors that change whether the caller becomes a qualified lead, a scheduled appointment, or a properly routed matter, with clear evidence standards.

For legal and healthcare-adjacent intake, include explicit criteria for confidentiality and appropriate handling of sensitive information. For law firms, intake must support duties of confidentiality consistent with ABA Model Rule 1.6 (Confidentiality of Information), and for covered healthcare workflows, privacy expectations should be designed with the HIPAA Privacy Rule in mind.

Most intake teams get better results with 6 to 10 categories, each with observable criteria and examples. Overly granular scorecards create auditor variance and agent confusion, especially across outsourced teams.

Opening and control: professional greeting, sets expectations, confirms reason for call.

Discovery and issue spotting: asks the right questions in the right order without leading.

Accuracy and completeness: captures required data fields and notes with minimal rework risk.

Process compliance: required disclosures, permission steps, and approved scripting where applicable.

Empathy and clarity: demonstrates active listening, explains next steps plainly.

Handoff quality: schedules, routes, or escalates correctly with complete documentation.

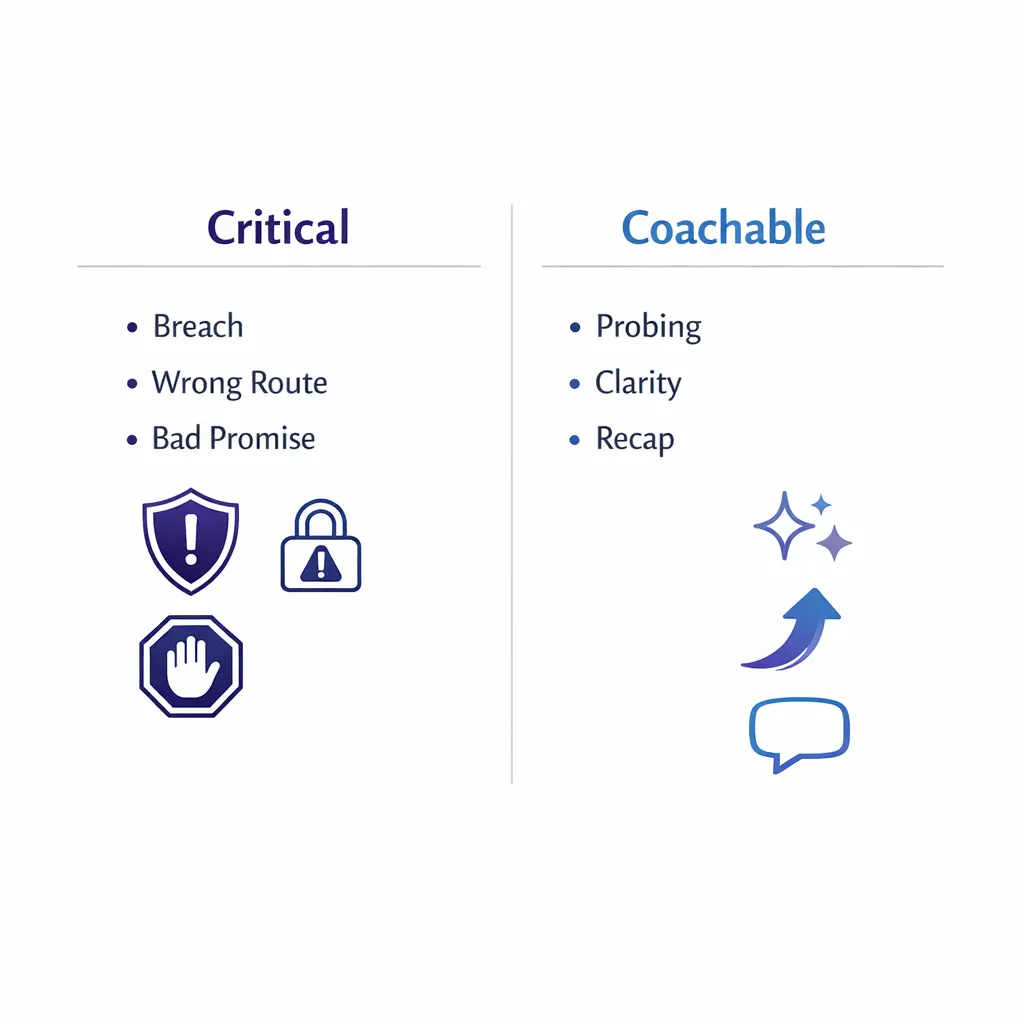

Not all misses are equal. Your outsourced intake QA scorecard should explicitly separate “this call cannot be accepted” failures from “this needs coaching” behaviors.

Critical errors: confidentiality breach, incorrect eligibility decision, unapproved promises, improper advice, wrong routing that causes time loss, missing required permission steps where your process requires them.

Coachable misses: weak probing, poor call control, unclear next steps, incomplete recap, inconsistent tone.

If lead intake accuracy is the business priority, the scorecard must make accuracy expensive to miss. A common pattern is to weight “accuracy and completeness” and “handoff quality” higher than “soft skills,” while still requiring a minimum bar for empathy and clarity.

Document weight decisions in plain language so your internal stakeholders and your BPO partner can defend the scorecard when priorities change.
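As a rough illustration, a weighted scorecard with a separate critical-error list could be expressed along these lines. The category keys mirror the six categories above, but the weights, the 0-to-1 rating scale, the minimum bar, the choice to zero the score on any critical error, and the names `AuditResult` and `score_call` are all assumptions made for this sketch, not recommended values.

```python
from dataclasses import dataclass, field

# Assumed weights (they sum to 1.0): accuracy and handoff outweigh soft skills.
WEIGHTS = {
    "opening_and_control": 0.10,
    "discovery": 0.15,
    "accuracy_and_completeness": 0.30,
    "process_compliance": 0.15,
    "empathy_and_clarity": 0.10,
    "handoff_quality": 0.20,
}
# Assumed floor for categories that carry little weight but still need a minimum.
MINIMUM_BAR = {"empathy_and_clarity": 0.5}


@dataclass
class AuditResult:
    ratings: dict  # category -> rating from 0.0 (miss) to 1.0 (meets)
    critical_errors: list = field(default_factory=list)


def score_call(result):
    """Return a 0-100 weighted score, an accept/reject flag, and coaching flags."""
    missing = set(WEIGHTS) - set(result.ratings)
    if missing:
        raise ValueError(f"unrated categories: {sorted(missing)}")
    weighted = sum(WEIGHTS[c] * result.ratings[c] for c in WEIGHTS)
    below_bar = sorted(c for c, floor in MINIMUM_BAR.items() if result.ratings[c] < floor)
    auto_fail = bool(result.critical_errors)  # any critical error rejects the call
    return {
        "score": 0.0 if auto_fail else round(weighted * 100, 1),
        "accepted": not auto_fail,
        "needs_coaching": below_bar,
    }
```

Keeping the weights in one named table makes it easier to show stakeholders exactly what changed when priorities shift.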

Write criteria so two auditors can score the same call within a tight range. Replace vague phrasing like “good rapport” with “used caller name, acknowledged concern, and confirmed next step before ending the call.”

For each category, add examples of “meets,” “misses,” and “exceeds,” and specify what counts as acceptable documentation in the CRM or intake platform.

Intake call monitoring should create a reliable signal, not noise. That means your audit program must be consistent in sampling, scoring, and how feedback is recorded and delivered.

Audits are also where outsourced relationships succeed or fail. If your vendor feels audits are arbitrary, they will optimize for score defense, not performance improvement.

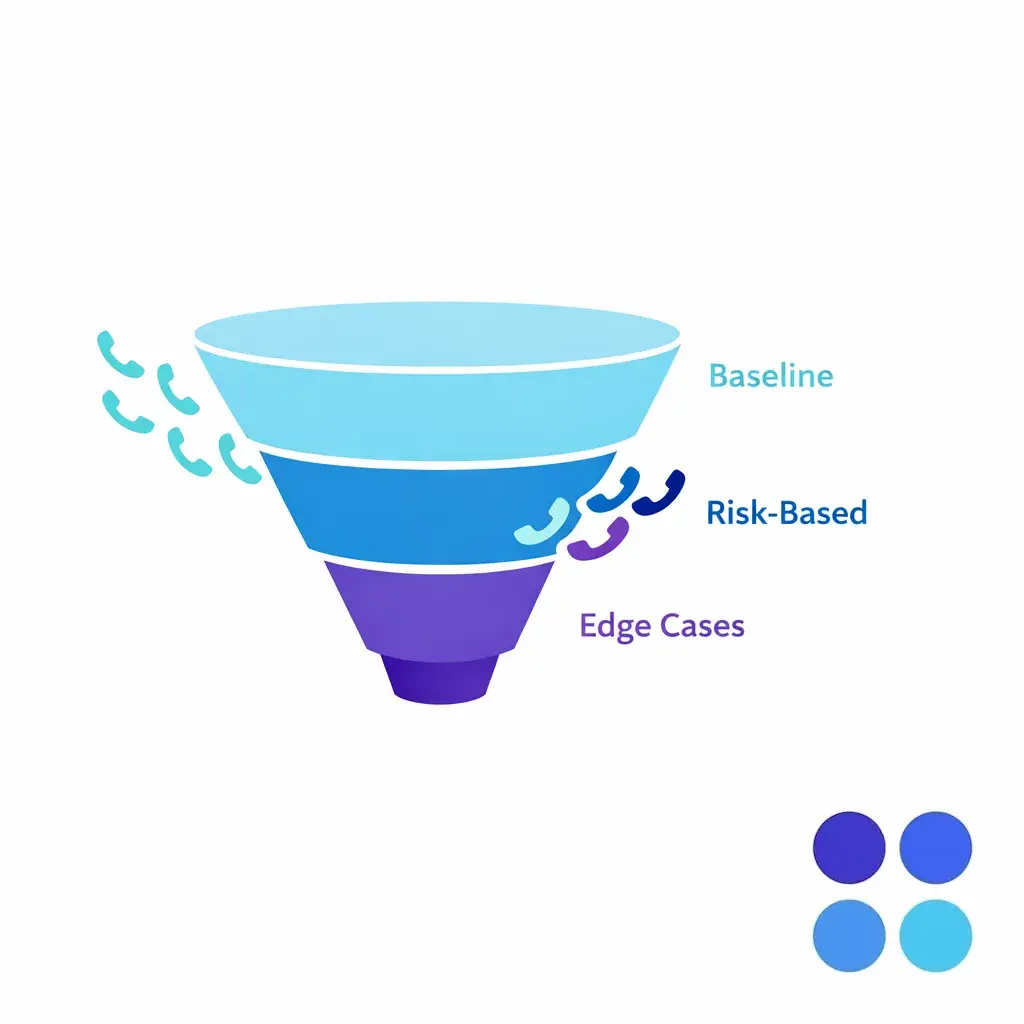

Rather than trying to “monitor everything,” choose a sampling approach that scales. Use higher audit frequency during onboarding, process changes, seasonal surges, or when a location’s KPIs start drifting.

Baseline sampling: audit enough interactions per agent to see patterns, not one-off exceptions.

Risk-based sampling: oversample high-stakes call types (high-value cases, clinical escalations, safety issues).

Edge-case sampling: include unusual scenarios that reveal script gaps and routing ambiguity.
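The baseline and risk-based rules above can be sketched as a small selection function. This is only an illustration: the record fields (`id`, `agent`, `call_type`), the high-risk labels, and the default rates are hypothetical, and edge-case sampling is left out because it usually depends on manual tagging.

```python
import math
import random
from collections import defaultdict

# Assumed labels for high-stakes call types; replace with your own taxonomy.
HIGH_RISK_TYPES = {"high_value_case", "clinical_escalation", "safety_issue"}


def select_audit_sample(calls, per_agent=3, high_risk_rate=0.5, seed=None):
    """Pick calls to audit: a baseline per agent plus an oversample of high-risk calls."""
    rng = random.Random(seed)
    by_agent = defaultdict(list)
    for call in calls:
        by_agent[call["agent"]].append(call)

    selected = {}
    # Baseline: up to `per_agent` randomly chosen calls for every agent.
    for agent_calls in by_agent.values():
        for call in rng.sample(agent_calls, min(per_agent, len(agent_calls))):
            selected[call["id"]] = call

    # Risk-based: add a share of the high-stakes calls not already chosen.
    high_risk = [
        c for c in calls
        if c["call_type"] in HIGH_RISK_TYPES and c["id"] not in selected
    ]
    extra = min(len(high_risk), math.ceil(len(high_risk) * high_risk_rate))
    for call in rng.sample(high_risk, extra):
        selected[call["id"]] = call
    return list(selected.values())
```

During onboarding or a process change, raising `per_agent` or `high_risk_rate` for a limited period is one way to apply the temporary increase in audit frequency described above.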

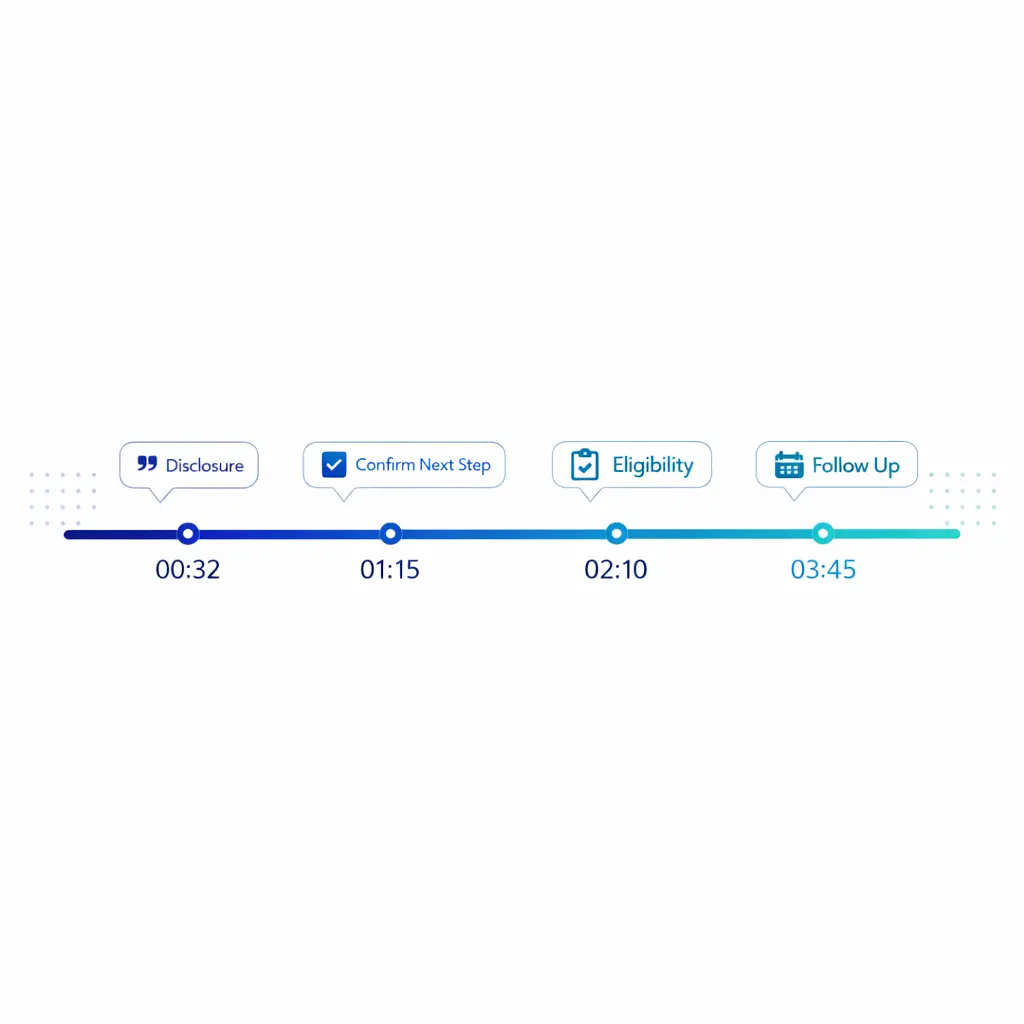

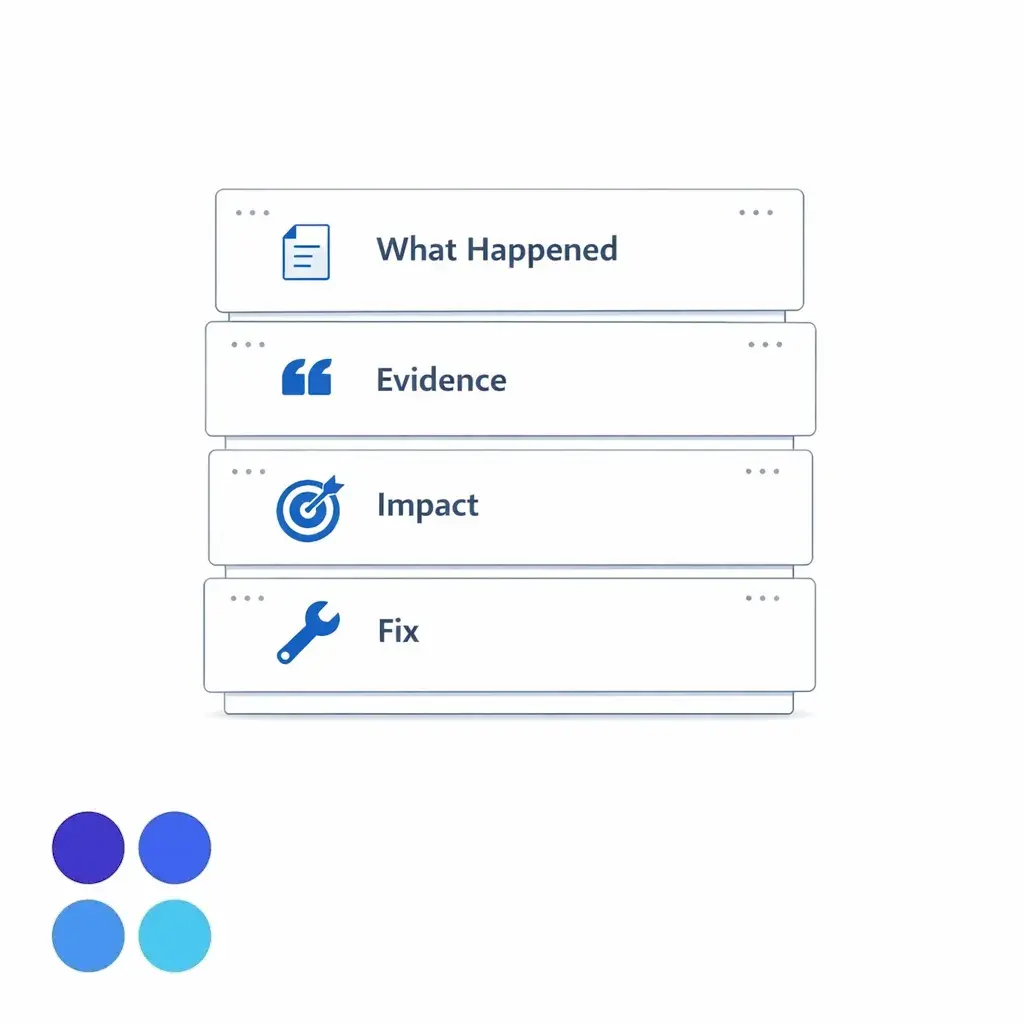

Audit notes should be structured so they can be used for coaching and trend analysis. If your audit output is just a numeric score, your coaching program will stall.

What happened: short summary of the call and outcome.

Evidence: direct quotes or time stamps tied to scorecard criteria.

Impact: why this matters (conversion, accuracy, caller experience, compliance risk).

Fix: the exact behavior to repeat or change on the next call.
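One way to enforce this four-part structure is to make it the shape of the audit record itself. The sketch below is a minimal example; the class name `AuditNote`, the extra `call_id` and `criterion` fields, and the sample values are hypothetical.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AuditNote:
    call_id: str
    criterion: str       # scorecard criterion the evidence is tied to
    what_happened: str   # short summary of the call and outcome
    evidence: str        # direct quote or time stamp
    impact: str          # conversion, accuracy, caller experience, or compliance risk
    fix: str             # the exact behavior to repeat or change

    def __post_init__(self):
        # Reject notes with any blank field, so a bare score cannot be filed.
        empty = [name for name, value in asdict(self).items() if not str(value).strip()]
        if empty:
            raise ValueError(f"audit note is missing: {', '.join(empty)}")


# Made-up example values, for illustration only.
note = AuditNote(
    call_id="C-1001",
    criterion="handoff_quality",
    what_happened="Caller asked for a consult; agent sent the call to general voicemail.",
    evidence='03:42 "I will send you to the main line."',
    impact="Wrong routing delayed follow-up.",
    fix="Confirm the matter type, then use the scheduling path.",
)
```

Because every note carries a `criterion`, the same records can later be grouped for trend analysis.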

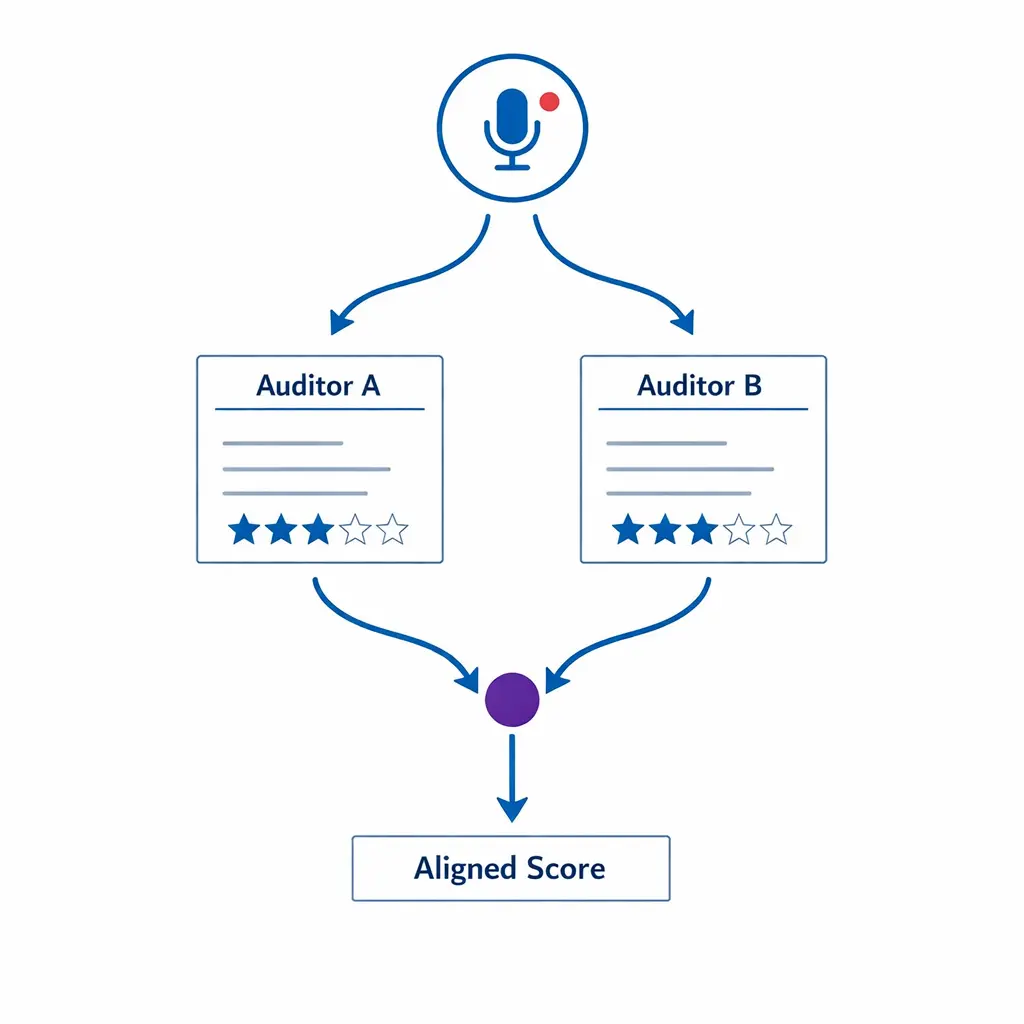

Build in periodic “double-scored” audits where two auditors independently score the same call, then reconcile differences. This is the fastest way to expose unclear criteria and keep outsourced intake QA scoring defensible.

If your workflow involves regulated healthcare data, confirm that your vendor relationship and operational practices align with your compliance obligations, including whether the vendor functions as a business associate under HHS guidance on HIPAA business associates. For legal intake, treat recordings, notes, and transcripts as sensitive work product and control access accordingly.

QA calibration sessions are where the scorecard becomes real. They align interpretation, update edge-case handling, and prevent “grade inflation” or overly harsh scoring when conditions change.

For outsourced teams, calibration also improves trust. Everyone leaves with a shared definition of what counts as acceptable performance and what requires immediate correction.

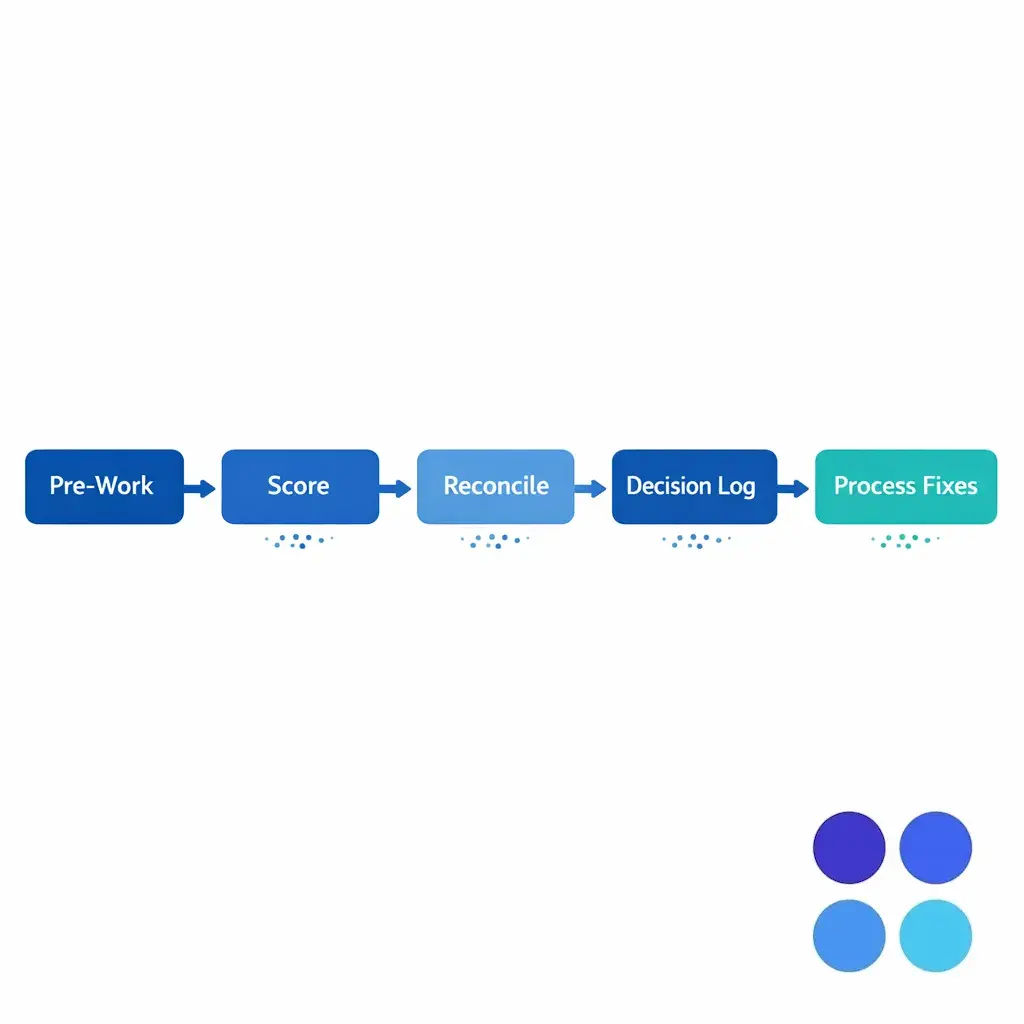

Pre-work: choose 3 to 6 calls representing typical scenarios and one edge case.

Independent scoring: each participant scores using the same scorecard.

Reconciliation: discuss deltas category-by-category and capture rule clarifications.

Decision log: record how to score specific scenarios going forward.

Process feedback: identify scripting gaps, unclear eligibility rules, or CRM fields that cause errors.
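For the reconciliation step, a short helper can surface which categories deserve discussion time. The sketch below assumes each participant's scores for one call are held in a dictionary and that a spread larger than `tolerance` points is worth discussing; both the data shape and the threshold are assumptions.

```python
def calibration_deltas(scores_by_auditor, tolerance=1):
    """Return {category: spread} for categories where auditors disagree.

    scores_by_auditor: {auditor: {category: score}} for a single call.
    Spread is the highest score minus the lowest; only spreads above
    `tolerance` are returned, largest first.
    """
    if len(scores_by_auditor) < 2:
        raise ValueError("need at least two auditors to compare")
    # Compare only the categories that every auditor scored.
    categories = set.intersection(*(set(s) for s in scores_by_auditor.values()))
    spreads = {}
    for category in categories:
        values = [s[category] for s in scores_by_auditor.values()]
        spreads[category] = max(values) - min(values)
    flagged = {c: d for c, d in spreads.items() if d > tolerance}
    return dict(sorted(flagged.items(), key=lambda kv: (-kv[1], kv[0])))
```

The categories this returns are natural candidates for entries in the decision log.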

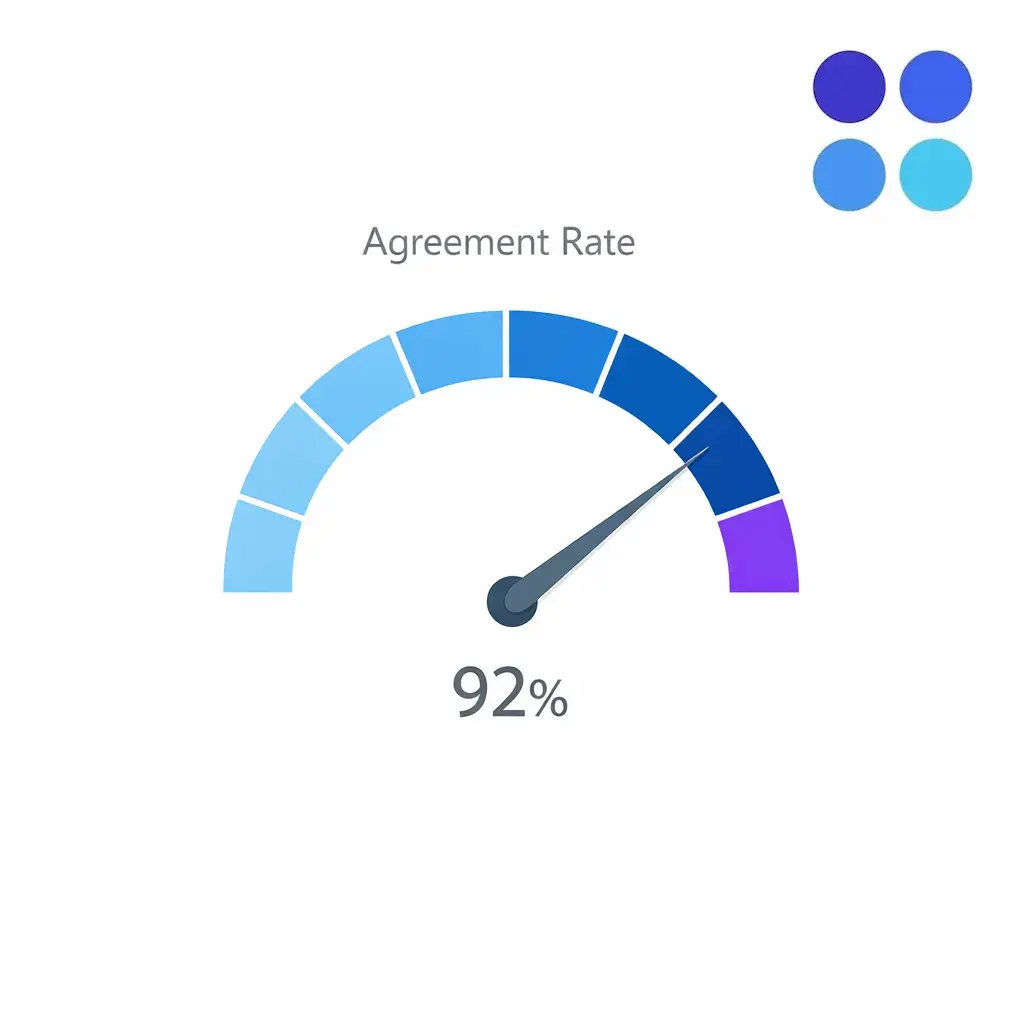

Track “auditor agreement rate” as an internal QA health metric.

If agreement is slipping, it is a signal that the scorecard has become ambiguous or the workflow has changed without documentation updates.
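The article does not define the agreement rate precisely, so the sketch below uses one plausible definition: the share of double-scored calls where the two auditors' total scores land within a fixed tolerance. The tolerance of 5 points and the function name are assumptions.

```python
def auditor_agreement_rate(double_scored, tolerance=5.0):
    """Share of double-scored calls where both auditors' scores are within tolerance.

    double_scored: list of (score_a, score_b) pairs from independently scored calls.
    """
    if not double_scored:
        raise ValueError("no double-scored audits to compare")
    agreed = sum(1 for a, b in double_scored if abs(a - b) <= tolerance)
    return agreed / len(double_scored)
```

Tracked per week or per calibration cycle, a falling value gives an early hint that criteria need rewording before disputed scores pile up.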

Audits do not improve performance unless coaching is specific, timely, and reinforced. A good call center coaching program uses QA results to build habits, not to punish misses.

For outsourced intake QA, coaching should be aligned across your internal stakeholders and the BPO’s team leads. Otherwise, agents receive mixed messages and optimize for the loudest feedback source.

Focus: choose the single highest-impact behavior from the audit.

Model: show what “good” sounds like using a call clip or a script snippet.

Practice: role-play the exact moment where the call went off track.

Commit: define what the agent will do on the next 5 calls.

Verify: re-audit quickly to confirm change, not weeks later.

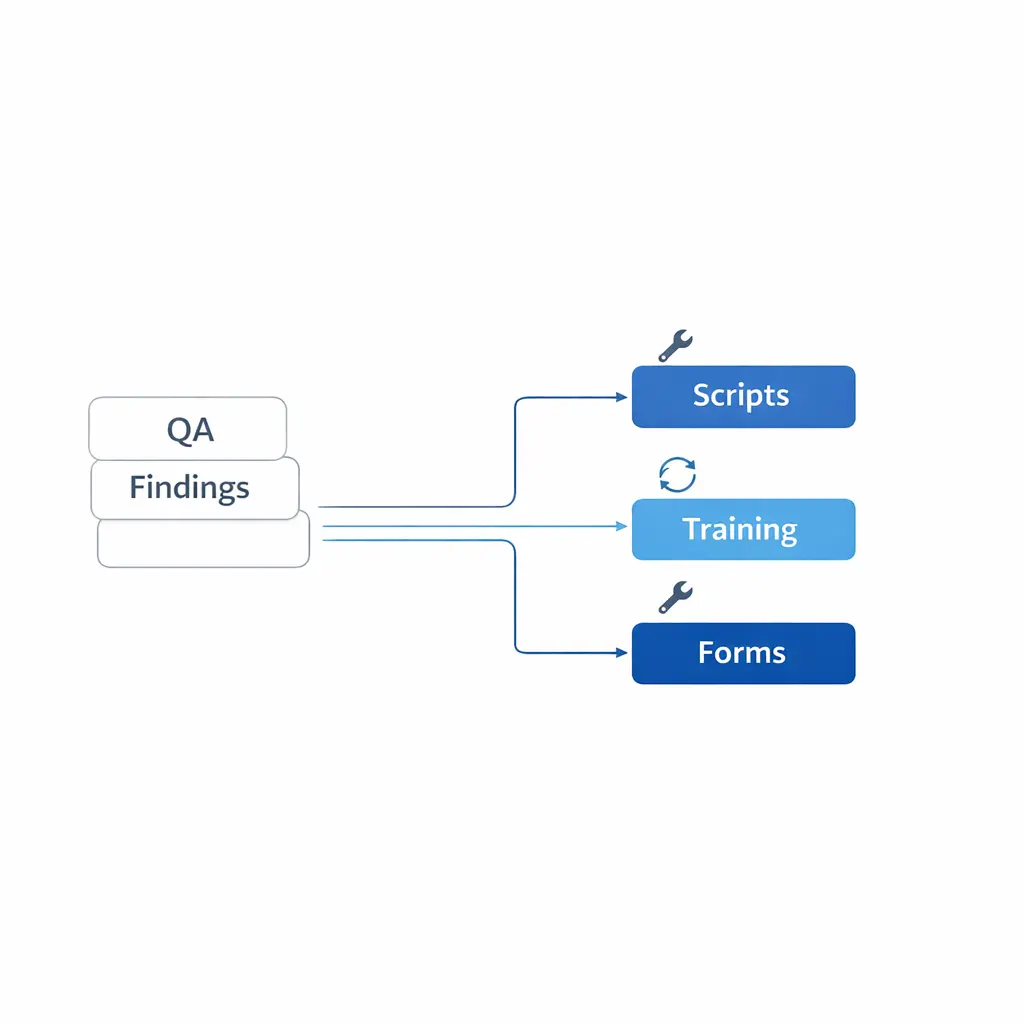

If multiple agents fail the same criterion, treat it as a process defect. Common causes include unclear eligibility rules, inconsistent scripting across campaigns, or intake forms that do not match what callers actually say.

Feed these findings into your change-control process so training, scripts, and scorecards are updated together.
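A simple way to separate individual coaching needs from process defects is to count how many distinct agents failed each criterion. In this sketch the input shape and the threshold of three agents are assumptions; pick a threshold that fits your team size.

```python
from collections import defaultdict


def find_process_defects(failures, min_agents=3):
    """Return criteria failed by at least `min_agents` distinct agents.

    failures: iterable of (agent, criterion) pairs, one per failed criterion.
    Result maps each flagged criterion to the sorted list of agents who failed it.
    """
    agents_by_criterion = defaultdict(set)
    for agent, criterion in failures:
        agents_by_criterion[criterion].add(agent)
    return {
        criterion: sorted(agents)
        for criterion, agents in agents_by_criterion.items()
        if len(agents) >= min_agents
    }
```

Repeated failures by the same agent count once, so a single struggling agent does not trigger a process review.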

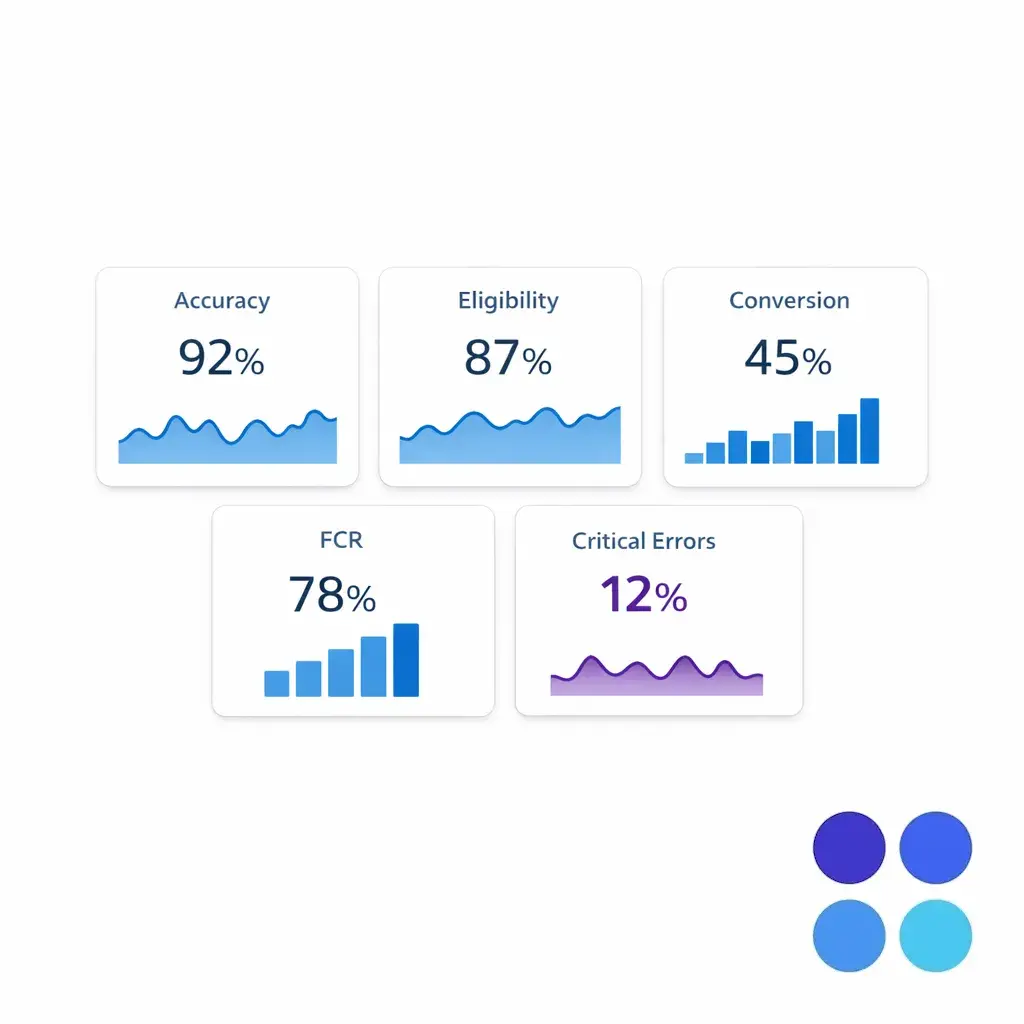

KPIs should do two jobs at once: protect the customer experience and protect intake integrity. For enterprise intake, a small set of well-defined measures is usually better than a large dashboard where teams can hide behind averages.

Use KPIs to spot drift early, then use audits to diagnose the cause. KPIs tell you “where to look,” while QA tells you “what to fix.”

Lead intake accuracy: percent of audited interactions with all required fields correct and complete.

Eligibility decision accuracy: whether calls were qualified or disqualified correctly based on your rules.

Conversion to next step: scheduled appointment, retained consult, signed intake packet, or completed handoff.

First call resolution (FCR): whether the caller’s primary need was handled without avoidable repeat contact.

Compliance-critical error rate: frequency of auto-fail events per audited sample.

Speed to answer and abandonment: indicates coverage and staffing fit.

Handle time distribution: watch outliers rather than chasing a single average.

After-call work and documentation timeliness: affects downstream teams and follow-up speed.

Escalation rate: can be healthy (using escalation correctly) or unhealthy (agents avoiding decisions).
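Several of these measures reduce to simple ratios over the audited sample, and handle time is better summarized by its spread than by its mean. The sketch below assumes each audit is a dictionary with three boolean fields and that handle times are in seconds; the field names are hypothetical.

```python
import statistics


def intake_kpis(audits):
    """Compute audit-based KPIs as fractions of the audited sample.

    audits: list of dicts with boolean keys
    'fields_complete', 'eligibility_correct', and 'critical_error'.
    """
    n = len(audits)
    if n == 0:
        raise ValueError("no audits in sample")

    def share(key):
        return sum(1 for a in audits if a[key]) / n

    return {
        "lead_intake_accuracy": share("fields_complete"),
        "eligibility_decision_accuracy": share("eligibility_correct"),
        "critical_error_rate": share("critical_error"),
    }


def handle_time_summary(seconds):
    """Median and 90th percentile of handle times (needs at least two values)."""
    deciles = statistics.quantiles(seconds, n=10)  # nine cut points
    return {"median": statistics.median(seconds), "p90": deciles[8]}
```

Comparing the median with the 90th percentile shows whether a few very long calls are distorting the picture, which a single average would hide.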

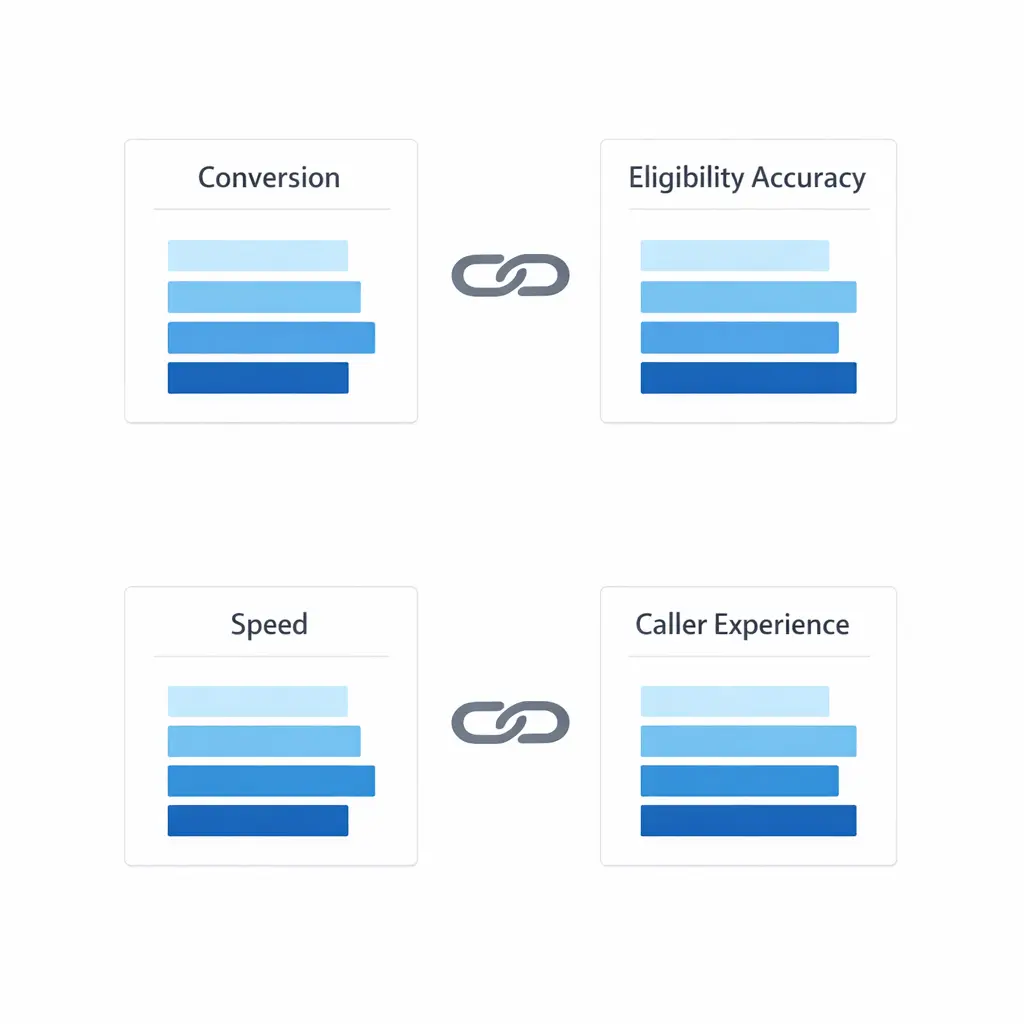

If you reward short handle times without pairing them with lead intake accuracy, you will get faster calls and worse data. If you reward conversion without controlling eligibility accuracy, you will get more “wins” that downstream teams reject.

Design KPI pairs that balance each other, such as conversion with accuracy, and speed with caller experience measures.
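A paired-KPI check can be automated in a few lines. The sketch below flags any pair where the rewarded metric improved while its counterweight slipped. The metric names, the pairs, and the 0.02 threshold are assumptions, and every metric is assumed to be expressed so that higher is better (for example, calls per hour rather than handle time).

```python
# Illustrative pairs: (metric being rewarded, counterweight metric).
KPI_PAIRS = [
    ("conversion_rate", "eligibility_decision_accuracy"),
    ("calls_per_hour", "lead_intake_accuracy"),
]


def unbalanced_pairs(previous, current, pairs=KPI_PAIRS, min_drop=0.02):
    """Flag pairs where the rewarded metric rose while its counterweight fell.

    previous, current: {metric_name: value} for two reporting periods.
    A pair is flagged when the counterweight dropped by more than `min_drop`.
    """
    flagged = []
    for rewarded, counterweight in pairs:
        gain = current[rewarded] - previous[rewarded]
        drop = previous[counterweight] - current[counterweight]
        if gain > 0 and drop > min_drop:
            flagged.append((rewarded, counterweight))
    return flagged
```

A flagged pair is a prompt to pull audits for that team and period, not a verdict on its own.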

BPO performance management works when expectations are predictable and decisions are documented. That means a cadence that connects day-to-day metrics to weekly coaching and monthly process improvements.

Daily: coverage health, queue performance, urgent exceptions, and critical error alerts.

Weekly: QA trends, coaching completion, calibration outcomes, and top three process blockers.

Monthly: scorecard tuning, training refreshers, and workflow changes (scripts, routing, eligibility).

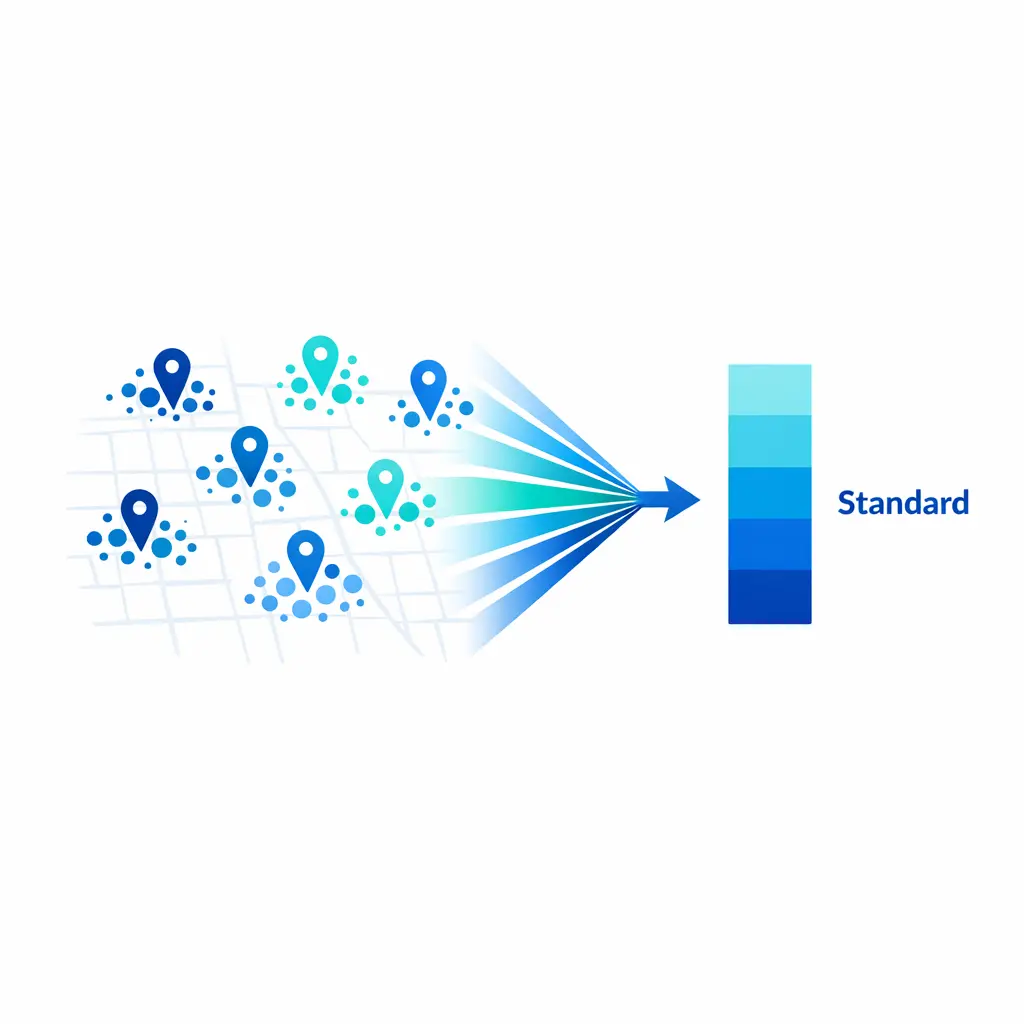

Quarterly: target reset, expansion planning, and multi-location standardization decisions.

Clarify who owns script updates, intake form changes, QA scoring rules, and escalation decisions. When ownership is unclear, outsourced teams stall while internal stakeholders debate priorities.

Document a simple decision path for “what changes require retraining” versus “what can be handled via an agent memo,” and tie those changes to calibration.

Most organizations do not fail at outsourced intake QA because they lack tools. They fail because they treat QA as a policing function instead of a systems function.

Misconception: “A single quality score is enough.” Reality: you need critical errors, category trends, and coaching follow-through.

Misconception: “More audits automatically improve quality.” Reality: unclear criteria and weak coaching create more noise, not better behavior.

Misconception: “Outsourcing means less control.” Reality: a well-run scorecard, calibration, and KPI cadence often increase control versus ad hoc internal monitoring.

Misconception: “Scripts solve consistency.” Reality: scripts help, but consistency comes from decision rules, practice, and documented edge-case handling.

Mistake: changing eligibility rules without updating the scorecard, training, and audit notes templates at the same time.

Use this contact center QA checklist to stand up (or tighten) outsourced intake QA in a way that scales across locations and vendors. If you already have QA in place, treat this as a gap analysis.

Define outcomes: pick 2 to 4 intake outcomes that matter most (qualified lead, scheduled consult, correct routing, complete documentation).

Draft the scorecard: 6 to 10 categories, clear evidence standards, and a separate critical-error list.

Set weights: make accuracy and handoff quality expensive to miss if downstream teams rely on intake data.

Choose sampling rules: baseline + risk-based sampling, plus temporary increases during change periods.

Standardize audit notes: require “what happened, evidence, impact, fix” on every audit.

Schedule calibration: put QA calibration sessions on the calendar and maintain a decision log.

Launch coaching: one skill at a time, practice-based, with quick re-audits to verify change.

Finalize KPI pairs: balance conversion with eligibility/accuracy and speed with caller experience.

Operationalize governance: weekly QA trend review, monthly process tuning, clear owners for changes.

Audit the system: periodically review auditor agreement and whether QA findings lead to process fixes.

If you are trying to standardize intake across multiple sites, extended hours, or overflow volume, Go Answer can help you build an outsourced intake QA program that stays consistent as you scale. The goal is simple: fewer preventable misses, cleaner handoffs, and performance you can trust week after week.

Request Pricing or Book a Discovery Call to talk through your intake workflow, your QA scorecard needs, and the KPIs you want to manage to. If you prefer to start with context first, you can also see how Go Answer works and then talk to a specialist about the right coverage and quality model for your teams.

Learn why thousands of companies rely on Go Answer.

Try us risk-free for 14 days!

Enjoy our risk-free trial for 14 days or 200 minutes, whichever comes first.

Have more questions? Call us at 888-462-6793
